Check out the code for this project here

Implementing Google's PageRank Algorithm in Python

PageRank was the algorithm that lead to googles great success in the space of search engines. The core idea of the algorithm is that if a page links to another page it is effectively a "vote" for that page.

I build a python program that was an implementation of this algorithm as a project for Harvard's CS50’s Introduction to Artificial Intelligence with Python. This was a fun way to see how the software we use everyday actually works.n

The Random Surfer Model

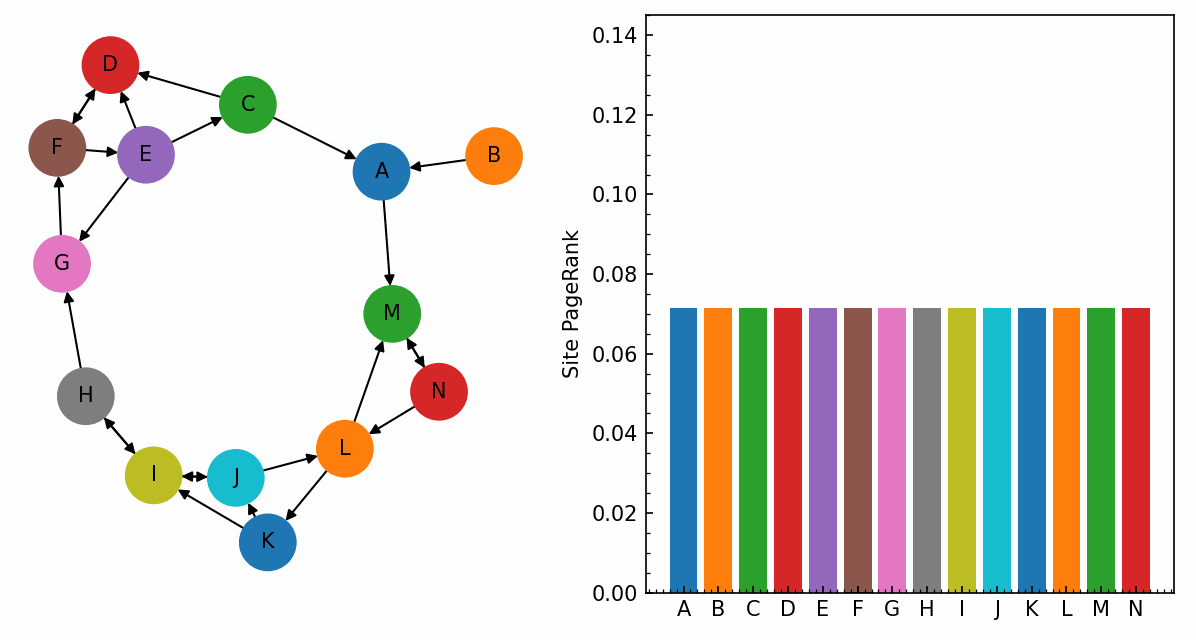

The way that the algorithm works is that it imagines a "Random Surfer" who is navigating the web. This imagines a hypothetical person who goes through pages and randomly clicks on links. They keep doing this forever and the pages that they end up visiting the most are weighting as the most important.

The algorithm also has a damping factor which normally has a value of 0.85. This ensures you don't have a pair of pages that link to each other and the random surfer just goes in an infinite loop. This damping factor is the chance they click a link on this page, if they don't click a link on this page they will be randomly teleported to a completely random other page.

If we have an edge case and we have a page that has no links to any other pages on it to prevent the algorithm from breaking we just have it so it links to every other page with an equal probability.

The Implementation

Here I implemented this algorithm in two different ways:

1. By Sampling

With this approach I have a sample_pagerank function that simulates the Random Surfer situation I went over above and takes 10,000 steps through a large corpus of pages. At each step we use Python's random module to decide where to go next. We keep a running count in a dictionary of how many times the Random surfer lands on each page. We then divide this by the total number of steps to give us an estimated PageRank.

2. By Iteration

In the other approach instead of simulating the random surfer I used an iterate_pagerank function that applies the recursive PageRank formula to every page in the corpus:

-

is the PageRank of the current page.

-

is the total number of pages in the corpus.

-

represents all pages that link to page .

This works by starting by assigning every page an equal rank of 1 / N. Then we iteratively update over in a while loop. The ranks will constantly shift but we have it so it keeps going and if it changes by less than 0.001 between iterations then we use a normalisation step to make sure all the ranks add to 1.

What's Next?

Here are some of the ways that the PageRank algorithm has changed since it was first created and if I were to go back to this project I would try and implement these:

-

Handling Link Farms: In the beginning people would try and artificially increase their PageRank by creating many fake sites that link to their page. In the modern systems there are penalty weights to detect these pages and lower their rank.

-

Topic-Sensitive PageRank: At the moment the Radom Surfer is completely random. One upgrade we could make would be to personalise the damping factor to favour the specific topics in the users history.