Check out the code for this project here

Training an AI for the Game of Nim

For this project I built an AI that teaches itself how to play the game of Nim using Reinforcement Learning. This project was developed for Harvard's CS50’s Introduction to Artificial Intelligence with Python .

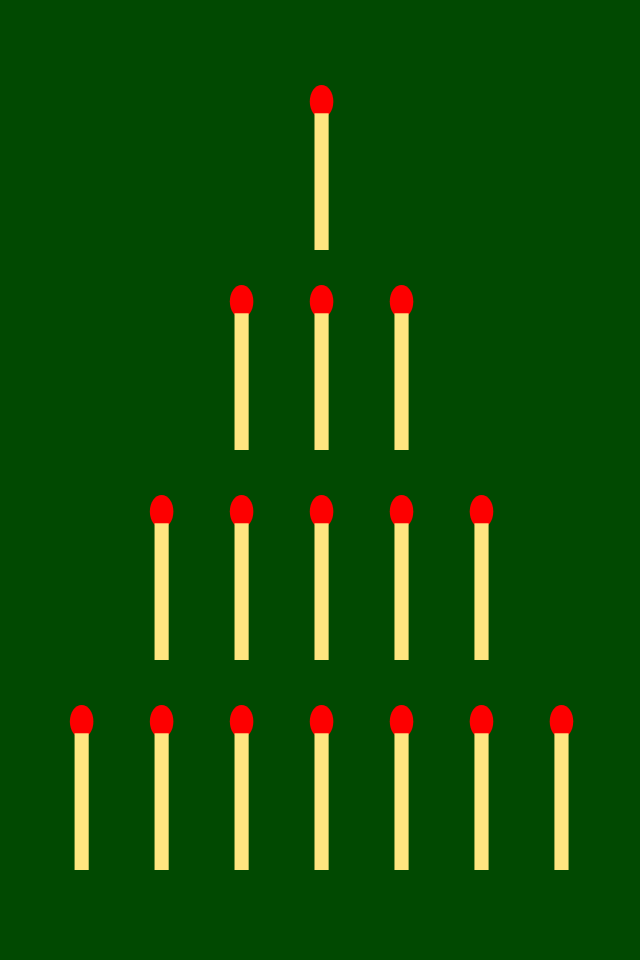

The Game of Nim

The way that the game of nim works is that we have multiple piles of items on the table as shown in the image above. Two players then take turns. If its someones turn they must choose one of the piles and can remove any amount of items from the pile. They have to remove at least one or can remove the whole pile. The game is over when there are no items left.

In the case of this project I implemented the "misère" version. This means that the person who takes the last item is the loser. You can also play versions where its the other way round and the person who takes the last item wins.

I have a Nim class where I have a move function which it handles the move logic. In this logic the current player makes a move and then the turn switches and if the board is empty the next player is the winner meaning the person who takes the last item is the loser.

The Architecture

To solve this game I used a kind Reinforcement Learning called Q-Learning.

Instead of giving the program a set strategy to win the NimAI class starts completely clueless. The class initialises an empty self.q dictionary that maps states to actions as it encounters them.

-

State (): This denotes the current layout of the board and is. represented as a tuple of the piles (e.g.,

(1, 3, 5, 7)). -

Action (): This denotes move the AI makes and is represented as a tuple of the chosen pile and how many items to remove (e.g.,

(1, 2)). -

Q-Value (): This represents how "good" it is to take a specific action in a specific state.

In the case the AI hasn't encountered a state-action pair before I have a get_q_value function that simply returns 0.

What's Next?

There are some limits to the current program and if I was to go back to this project here is what I would change:

-

Model Persistence: Currently the model has to train itself everytime that you run the program. If I could have a way to save the models state at the end this would not have to happen everytime and would speedup the program alot.

-

Adjustable Difficulty: Something that would be cool to add would be a feature that would allow the users to play against different difficulties i.e. play against models that had been trained on different numbers of games so were easier or harder to beat than the others.

This was a fun project that helped develop my understanding of python and reinforcement learning. It also taught me about the game of Nim which I was unaware of before this point and is fun to play with other humans.